Home

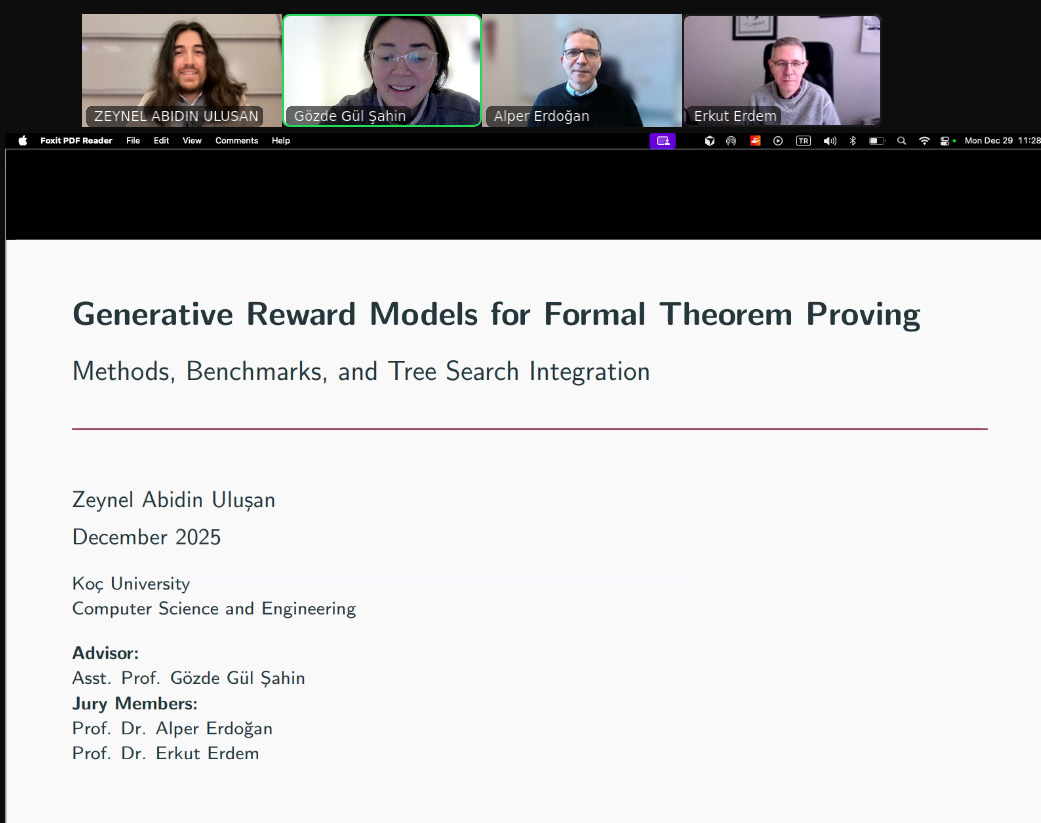

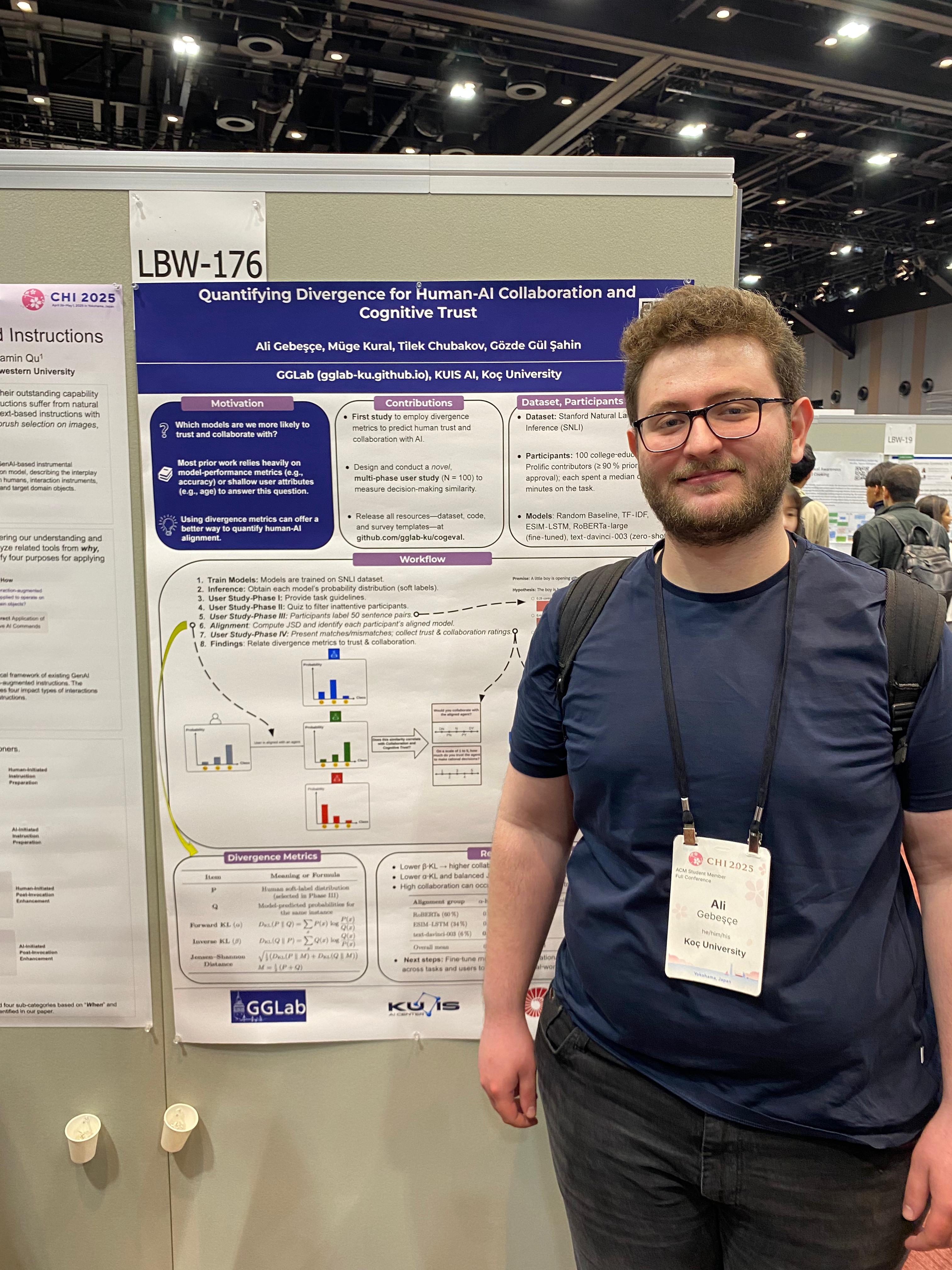

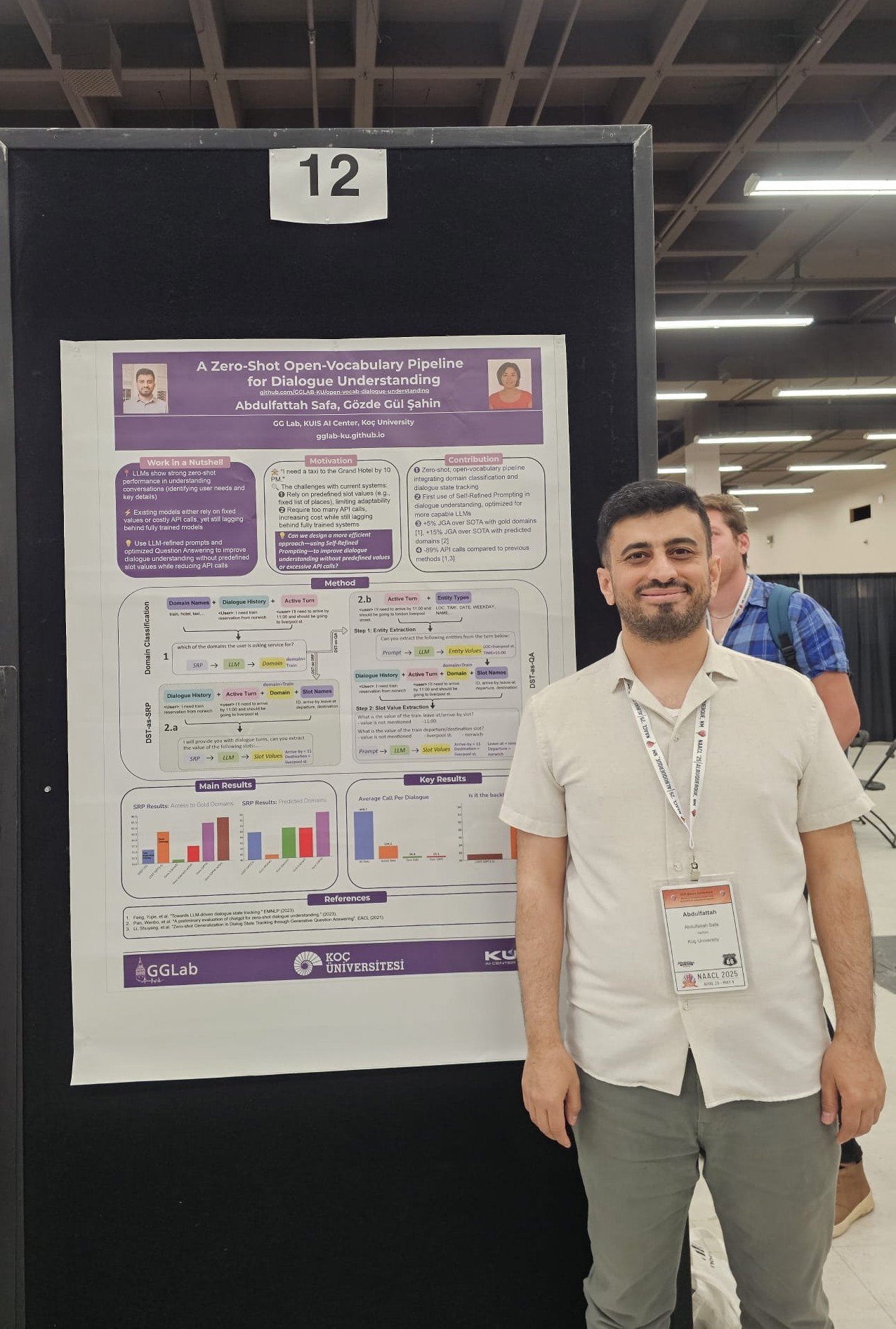

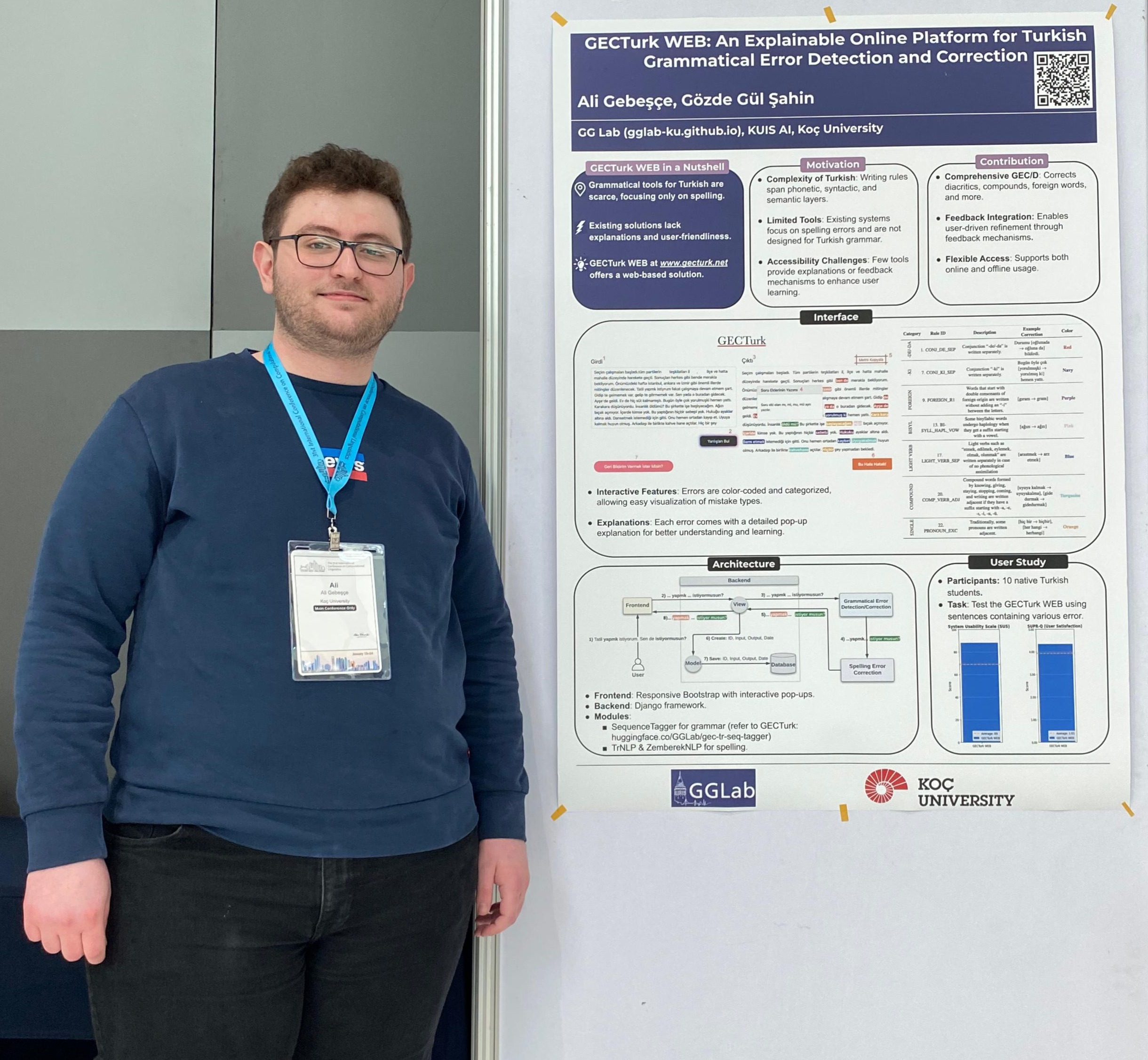

GGLab (pronounced "cici" in Turkish) is a Natural Language Processing (NLP) research lab led by Prof. Gözde Gül Şahin. We conduct fundamental research on core methodologies, including but not limited to learning and performing under low-resource settings and incorporating expert knowledge into language technologies. We also explore how these methodologies can be applied to tasks such as text simplification, linguistic structure analysis, grammatical error correction, dialogue understanding, and question answering. The lab has recently moved to FAU Erlangen-Nürnberg and is part of the Intelligent Language Systems Chair. We are affiliated with the Computer Science Department at Koç University and the KUIS AI Lab, located in the north of Istanbul, Türkiye. GGLab has been partly funded by the Scientific and Technological Research Council of Türkiye via the Tübitak 2232B International Fellowship for Outstanding Researchers program, the Wikimedia Foundation via the Wikimedia Research Fund, and the Google (Gemini) Academic Program.

Talk to us or join our group if you are interested in these topics.